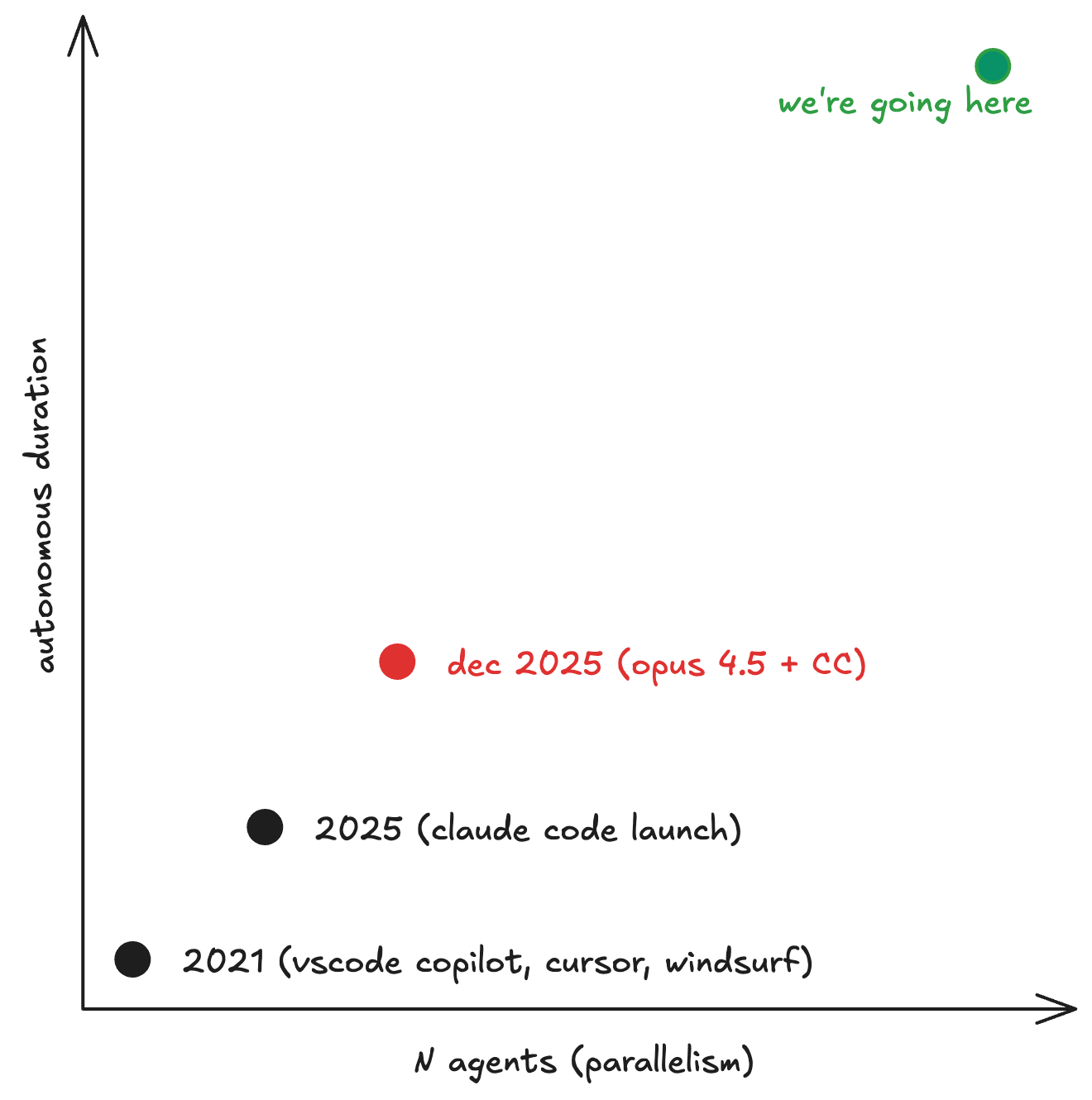

2025 was the year of agents. Claude Code made working with LLMs akin to pair programming with a very skilled but inexperienced junior developer. Some time in December 2025, with the release of Opus 4.5, a step change in capability became noticeable: Claude was able to work by itself for hours at a time.

The obvious next step is to tmux many Claude Code instances and have them work on separate issues.

This became so common that Anthropic began to refer to it as "multi-Clauding".

In Steve Yegge's parlance, this is level 6/7 of agentic coding.

tmux is great, but the cognitive overhead gets brutal.

You're constantly switching between idea generation, steering, and review.

It's clear that you are the bottleneck, and that it's a "skill issue" that you're not able to manage more agents.

Surely there's a better way.

So I built hive.

What is it

Hive is a single-process async Python orchestrator that coordinates multiple LLM coding agents against a SQLite queue, using git worktrees as execution sandboxes.

There are three moving parts. The Queen is a Claude session that acts as your project manager: you describe the work, she explores the codebase, proposes a decomposition into issues with dependencies, and waits for your approval. Workers are parallel Claude (or Codex) sessions, each in their own git worktree, picking issues off the queue and implementing them. The Merge Pipeline is another agent that rebases, tests, and merges completed work back to main.

The core design principle: deterministic orchestration in Python, ambiguous decisions delegated to LLM sessions. State machine transitions, claiming, escalation? Python. Conflict resolution, test diagnosis, strategic decomposition? Claude.

Here's the full picture:

    You
    ├─ CLI / Queen (TUI)
    └─ Daemon (background)
            ↓
    SQLite Database (~/.hive/hive.db)
    ├─ Issues (work queue)
    ├─ Dependencies (DAG)
    ├─ Agents (ephemeral identity)
    ├─ Events (audit trail)
    ├─ Notes (inter-agent knowledge)
    └─ Merge Queue
            ↓
    Orchestrator (async event loop)
    ├─ Main Loop (spawn workers when slots open)
    ├─ Event Consumer (status updates from backends)
    └─ Merge Processor (per-project)
            ↓
    Backend Pool
    ├─ Claude WS Backend (claude CLI via WebSocket)
    ├─ Codex App Server Backend (stdio protocol)
    └─ Tau Backend (testing)
            ↓
    Workers (each in its own git worktree)

The state machine

Every issue goes through a 7-state lifecycle. This is the most important thing to understand about hive, because every design decision flows from keeping this state machine correct.

    OPEN ──(claim)──→ IN_PROGRESS ──(success)──→ DONE ──(merge)──→ FINALIZED
      ↑                    │
      └──(retry/switch)────┘
                           │
                      (exhausted)──→ ESCALATED
                           │
                      (manual)─────→ CANCELED

The escalation policy is a three-tier chain.

- Retry with the same agent, up to 2 attempts. Maybe the model just had a bad run.

- Agent switch, a fresh agent with a clean worktree, up to 2 switches.

- Escalate to a human.

There's also an anomaly detector: 3+ failures within a 10-minute window triggers immediate escalation, bypassing the retry budget. This catches systematic failures (broken test suite, missing dependency, provider issues) before they burn through tokens.
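The escalation chain and the anomaly detector can be sketched as one decision function. This is a minimal illustration, not hive's actual code: `Action`, `FailureHistory`, and `next_action` are hypothetical names, the sketch assumes the caller resets `retries_used` after an agent switch, and it assumes `failure_times` holds whatever failures the detector is scoped to watch.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    RETRY = "retry"          # same agent, another attempt
    SWITCH_AGENT = "switch"  # fresh agent, clean worktree
    ESCALATE = "escalate"    # hand the issue to a human


@dataclass
class FailureHistory:
    retries_used: int            # retries already spent on the current agent
    switches_used: int           # agent switches already spent on this issue
    failure_times: list[float]   # failure timestamps in seconds, latest included


def next_action(
    history: FailureHistory,
    now: float,
    max_retries: int = 2,
    max_agent_switches: int = 2,
    anomaly_count: int = 3,
    anomaly_window_s: float = 600.0,
) -> Action:
    """Decide what to do after a failure (hypothetical helper)."""
    # Anomaly detector: 3+ failures inside the 10-minute window
    # bypass the retry budget entirely.
    recent = [t for t in history.failure_times if now - t <= anomaly_window_s]
    if len(recent) >= anomaly_count:
        return Action.ESCALATE
    # Tier 1: retry with the same agent.
    if history.retries_used < max_retries:
        return Action.RETRY
    # Tier 2: switch to a fresh agent.
    if history.switches_used < max_agent_switches:
        return Action.SWITCH_AGENT
    # Tier 3: budget exhausted.
    return Action.ESCALATE
```

Keeping this as a pure function of counters and timestamps fits the design principle above: the policy is deterministic, so it can be unit-tested without spawning any agents.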

Claiming work

The ready queue is a SQL query that resolves the dependency DAG:

    SELECT * FROM issues
    WHERE status = 'open'
      AND assignee IS NULL
      AND type != 'epic'
      AND NOT EXISTS (
        SELECT 1 FROM dependencies d
        JOIN issues blocker ON d.depends_on = blocker.id
        WHERE d.issue_id = issues.id
          AND blocker.status NOT IN ('done', 'finalized', 'canceled')
      )
    ORDER BY priority ASC, created_at ASC

An issue only becomes "ready" when all its blockers are resolved.

Claiming is a CAS-style atomic update: verify the issue is still open with no assignee, verify dependencies are still satisfied, then update in a single transaction. If two workers race for the same issue, one wins and the other gets False back.
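A compare-and-swap claim of this kind can be sketched with Python's built-in `sqlite3` module. The function name `claim_issue`, the `'in_progress'` status string, and the exact column layout are assumptions for illustration; only the guard conditions come from the description above.

```python
import sqlite3


def claim_issue(conn: sqlite3.Connection, issue_id: str, agent_id: str) -> bool:
    """Try to claim an issue; return True only if this caller won the race."""
    # `with conn` wraps the statement in a transaction and commits on success.
    with conn:
        cur = conn.execute(
            """
            UPDATE issues
               SET status = 'in_progress', assignee = ?
             WHERE id = ?
               AND status = 'open'
               AND assignee IS NULL
               AND NOT EXISTS (
                     SELECT 1 FROM dependencies d
                     JOIN issues blocker ON d.depends_on = blocker.id
                     WHERE d.issue_id = issues.id
                       AND blocker.status NOT IN ('done', 'finalized', 'canceled')
               )
            """,
            (agent_id, issue_id),
        )
    # The guards live in the WHERE clause, so a lost race or an unmet
    # dependency simply updates zero rows.
    return cur.rowcount == 1
```

Because the checks and the write are a single `UPDATE`, there is no window between "check" and "set" for a second worker to slip through.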

The backend abstraction

This is the part I'm most pleased with.

The HiveBackend interface is ~15 methods covering session management (create_session, abort_session, get_session_status), communication (send_message_async, get_messages, reply_permission), and event streaming (on(event_type, handler), connect_with_reconnect()).

The orchestrator doesn't care which backend is running; it just calls the interface.
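To make the shape of that interface concrete, here is a rough sketch as an abstract base class. The method names are the ones listed above; every signature, the `Handler` type, the toy `InMemoryBackend`, and the `start_worker` helper are assumptions for illustration and will differ from the real code.

```python
import asyncio
from abc import ABC, abstractmethod
from typing import Any, Callable

Handler = Callable[[dict[str, Any]], None]  # assumed event-handler shape


class HiveBackend(ABC):
    # Session management
    @abstractmethod
    async def create_session(self, workdir: str, model: str) -> str: ...
    @abstractmethod
    async def abort_session(self, session_id: str) -> None: ...
    @abstractmethod
    async def get_session_status(self, session_id: str) -> str: ...
    # Communication
    @abstractmethod
    async def send_message_async(self, session_id: str, text: str) -> None: ...
    @abstractmethod
    async def get_messages(self, session_id: str) -> list[dict[str, Any]]: ...
    @abstractmethod
    async def reply_permission(self, session_id: str, request_id: str, allow: bool) -> None: ...
    # Event streaming
    @abstractmethod
    def on(self, event_type: str, handler: Handler) -> None: ...
    @abstractmethod
    async def connect_with_reconnect(self) -> None: ...


class InMemoryBackend(HiveBackend):
    """Toy backend: records messages instead of talking to a model."""

    def __init__(self) -> None:
        self._messages: dict[str, list[dict[str, Any]]] = {}
        self._handlers: dict[str, list[Handler]] = {}

    async def connect_with_reconnect(self) -> None:
        return None  # nothing to connect to

    async def create_session(self, workdir: str, model: str) -> str:
        session_id = f"session-{len(self._messages) + 1}"
        self._messages[session_id] = []
        return session_id

    async def abort_session(self, session_id: str) -> None:
        self._messages.pop(session_id, None)

    async def get_session_status(self, session_id: str) -> str:
        return "idle" if session_id in self._messages else "gone"

    async def send_message_async(self, session_id: str, text: str) -> None:
        self._messages[session_id].append({"role": "user", "text": text})
        for handler in self._handlers.get("message", []):
            handler({"session_id": session_id, "text": text})

    async def get_messages(self, session_id: str) -> list[dict[str, Any]]:
        return list(self._messages[session_id])

    async def reply_permission(self, session_id: str, request_id: str, allow: bool) -> None:
        return None  # the toy backend never asks for permission

    def on(self, event_type: str, handler: Handler) -> None:
        self._handlers.setdefault(event_type, []).append(handler)


async def start_worker(backend: HiveBackend, workdir: str, prompt: str) -> str:
    """Orchestrator-side code touches only the interface, never a concrete backend."""
    await backend.connect_with_reconnect()
    session_id = await backend.create_session(workdir, model="claude-sonnet-4-6")
    await backend.send_message_async(session_id, prompt)
    return session_id
```

Swapping `InMemoryBackend` for a Claude or Codex implementation would leave `start_worker` untouched, which is the point of the abstraction.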

Why does this matter? Frontier models trade the top spot every few months. And it's unclear how different models interact with different CLI wrappers (I hear GPT 5.4 in Claude Code is quite good). Being agnostic to both model and harness means the core orchestration code doesn't need to change while everything around it iterates rapidly.

The merge pipeline

When a worker completes an issue, the work enters a merge pipeline.

Merges are handled by a dedicated Claude session called the "Refinery." The Refinery gets the conflict context or test output, resolves the problem, and writes a structured result file. It can merge the work (conflict resolved, tests passing), reject it (send the issue back to open for rework), or escalate to a human (too complex or ambiguous).

The Refinery is a long-lived session per project, reused across multiple merge operations. When it accumulates too much context (>100k tokens or >20 messages), hive cycles the session to keep it fresh. Each project gets its own MergeProcessor, so multi-project orchestration doesn't create cross-project merge contention.
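The two deterministic pieces here, mapping a Refinery verdict to an issue status and deciding when to cycle the session, are small enough to sketch. The names `SessionUsage`, `should_cycle`, and `status_after_refinery`, and the verdict strings, are hypothetical; the thresholds and outcomes are the ones described above.

```python
from dataclasses import dataclass


@dataclass
class SessionUsage:
    tokens: int    # context tokens accumulated by the Refinery session
    messages: int  # messages exchanged in the session so far


def should_cycle(usage: SessionUsage, max_tokens: int = 100_000, max_messages: int = 20) -> bool:
    """Cycle the session once either threshold is exceeded."""
    return usage.tokens > max_tokens or usage.messages > max_messages


# Assumed verdict strings; the real result file format is not shown here.
_STATUS_BY_VERDICT = {
    "merged": "finalized",     # conflict resolved, tests passing
    "rejected": "open",        # back to the queue for rework
    "escalated": "escalated",  # needs a human
}


def status_after_refinery(verdict: str) -> str:
    """Map a Refinery verdict to the issue's next status."""
    try:
        return _STATUS_BY_VERDICT[verdict]
    except KeyError:
        raise ValueError(f"unknown Refinery verdict: {verdict!r}") from None
```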

Inter-agent knowledge transfer

Workers write .hive-notes.jsonl during execution:

{"category": "discovery", "content": "postgres dependency in main.py requires PGHOST to be set"}

{"category": "gotcha", "content": "tests require PYTHONPATH=src/"}

When a worker completes, hive harvests its notes and stores them in the database. When spawning a new worker on a related issue (same epic, same project), hive injects relevant sibling notes into the prompt.

This means if agent #1 discovers that the test suite needs a specific env var, agent #3 (working on a related task) will know that before it starts. Knowledge accumulates across the swarm without any agent needing to hold it all in context.
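Harvesting and injection could look roughly like this. It is a sketch under assumptions: the function names, the rule of skipping malformed lines, and the prompt wording are all invented for illustration; only the `category` and `content` fields come from the examples above.

```python
import json


def parse_notes(jsonl_text: str) -> list[dict]:
    """Parse the contents of a .hive-notes.jsonl file, skipping bad lines."""
    notes = []
    for line in jsonl_text.splitlines():
        line = line.strip()
        if not line:
            continue
        try:
            note = json.loads(line)
        except json.JSONDecodeError:
            continue  # an agent wrote a malformed line; ignore it
        if isinstance(note, dict) and "category" in note and "content" in note:
            notes.append(note)
    return notes


def render_notes_for_prompt(notes: list[dict], limit: int = 10) -> str:
    """Format sibling notes as a short block to prepend to a worker prompt."""
    if not notes:
        return ""
    lines = ["Notes from sibling agents:"]
    lines += [f"- [{n['category']}] {n['content']}" for n in notes[:limit]]
    return "\n".join(lines)
```

Capping the number of injected notes keeps the prompt small, which matters if an epic accumulates many discoveries.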

The Queen

The Queen is your main point of interaction with hive.

It's a Claude session running in your terminal (TUI mode).

You give it a spec, and then it explores the codebase, reads .hive/project-context.md for accumulated project knowledge, proposes a decomposition into issues with dependencies, waits for your approval, and then creates the issues and kicks off the daemon.

There's also a headless mode for scripting:

hive queen --headless -p "Bump all dependencies and update the lockfile"

The Queen is how you shift from steering individual agents to managing a project. You think about what needs to happen and how it decomposes.

Configuration and hackability

Hive uses a 4-layer config stack: built-in defaults → global TOML (~/.hive/config.toml) → project TOML (.hive.toml) → environment variables. The interesting knobs:

| Setting | Default | What it controls |
|---|---|---|
| max_agents | 3 | Concurrent workers |
| worker_model | claude-sonnet-4-6 | Model for implementation |
| refinery_model | claude-opus-4-6 | Model for merge conflicts |
| max_tokens_per_issue | 200,000 | Per-issue token budget |
| max_retries | 2 | Retries before agent switch |
| max_agent_switches | 2 | Switches before escalation |
| backend | claude | claude \| codex |
| test_command | -- | Merge gate test command |

What I've learned

The codebase is about 6,500 LOC of Python. It's designed to run locally, use minimal resources, and be simple enough that you can read the whole thing in an afternoon and start hacking on it.

Some things I learned while working on hive:

SQLite is the right database for this. WAL mode gives you concurrent reads during writes and the busy_timeout pragma handles lock contention gracefully. You can make the entire coordination layer just SQL queries. For 3-20 concurrent agents, SQLite is more than enough, and the operational simplicity is worth a lot.
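For reference, both settings are one-line pragmas with Python's built-in `sqlite3` module. The `open_db` helper and the 5-second timeout are illustrative choices, not hive's actual values.

```python
import sqlite3


def open_db(path: str, busy_timeout_ms: int = 5000) -> sqlite3.Connection:
    """Open a connection tuned for several concurrent readers and writers."""
    conn = sqlite3.connect(path)
    # WAL lets readers proceed while a writer is active. It only takes
    # effect for file-backed databases; ":memory:" keeps its own mode.
    conn.execute("PRAGMA journal_mode=WAL")
    # Wait up to busy_timeout_ms for a lock instead of failing immediately.
    conn.execute(f"PRAGMA busy_timeout={int(busy_timeout_ms)}")
    return conn
```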

Git worktrees are still underrated. Each agent gets its own worktree of the repo, branching from main. They can't step on each other's files, and when the work is done, you rebase and merge. When it fails, you delete the worktree. The isolation is perfect and the cleanup is trivial.
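The worktree lifecycle comes down to two git commands. The helpers below only build the argument lists, with a thin `run` wrapper to execute them; the helper names and the `hive/<issue>` branch naming in the usage note are hypothetical.

```python
import subprocess
from pathlib import Path


def add_worktree_cmd(repo: Path, worktree: Path, branch: str, base: str = "main") -> list[str]:
    """Command to create a worktree on a new branch starting from `base`."""
    return ["git", "-C", str(repo), "worktree", "add", "-b", branch, str(worktree), base]


def remove_worktree_cmd(repo: Path, worktree: Path) -> list[str]:
    """Command to delete a worktree, discarding uncommitted changes."""
    return ["git", "-C", str(repo), "worktree", "remove", "--force", str(worktree)]


def run(cmd: list[str]) -> None:
    """Execute a command, raising CalledProcessError on a non-zero exit."""
    subprocess.run(cmd, check=True)
```

For example, `run(add_worktree_cmd(repo, repo.parent / "wt-42", "hive/issue-42"))` gives an agent an isolated checkout, and `run(remove_worktree_cmd(repo, repo.parent / "wt-42"))` throws it away after a failure.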

I'm still uncertain about the notes system. It makes sense that agents discovering things about the codebase and sharing those discoveries with sibling agents should meaningfully reduce failure rates on related tasks. But it's hard to design tasks and benchmarks that measure this accurately, and it's something future work should explore.

The code is at github.com/nwyin/hive. It's MIT licensed, and designed to be forked and hacked on. If you're managing 3+ agents in tmux and want something more structured, give it a look.